Donghyeon Soon

I am a final-year undergraduate student at DGIST, South Korea, and a research intern at the Intelligent Systems and Learning Lab, advised by Daehee Park. I also work closely with Kyungdon Joo at UNIST and Hezhen Hu at UT Austin. My research focuses on 3D vision and generative modeling, particularly in 3D/4D reconstruction and parametric modeling of humans and animals.

Research

My research focuses on developing models for reconstructing, animating, and generating dynamic 3D and 4D content, especially for animals and humans. My work combines synthetic data pipelines, parametric animal and human models, and generative methods to turn 2D images and videos into animatable 3D/4D representations. I enjoy building tools and models that expand the creative and scientific possibilities of computer vision and graphics.

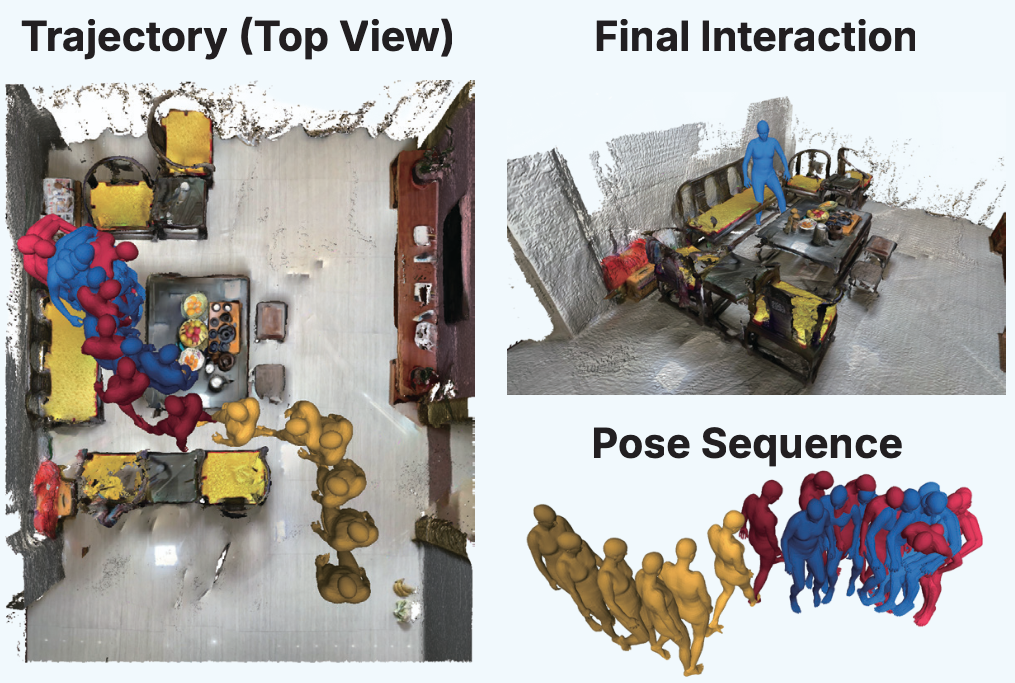

Scene-aware Human Motion Prediction Project

Under review

A framework for scene-aware human motion prediction that captures interaction with the environment to improve future trajectory and pose estimation.

Feed-Forward Animatable Animal Reconstruction

In preparation

A feed-forward approach for animatable animal reconstruction, recovering geometry and articulation from minimal inputs without iterative optimization.

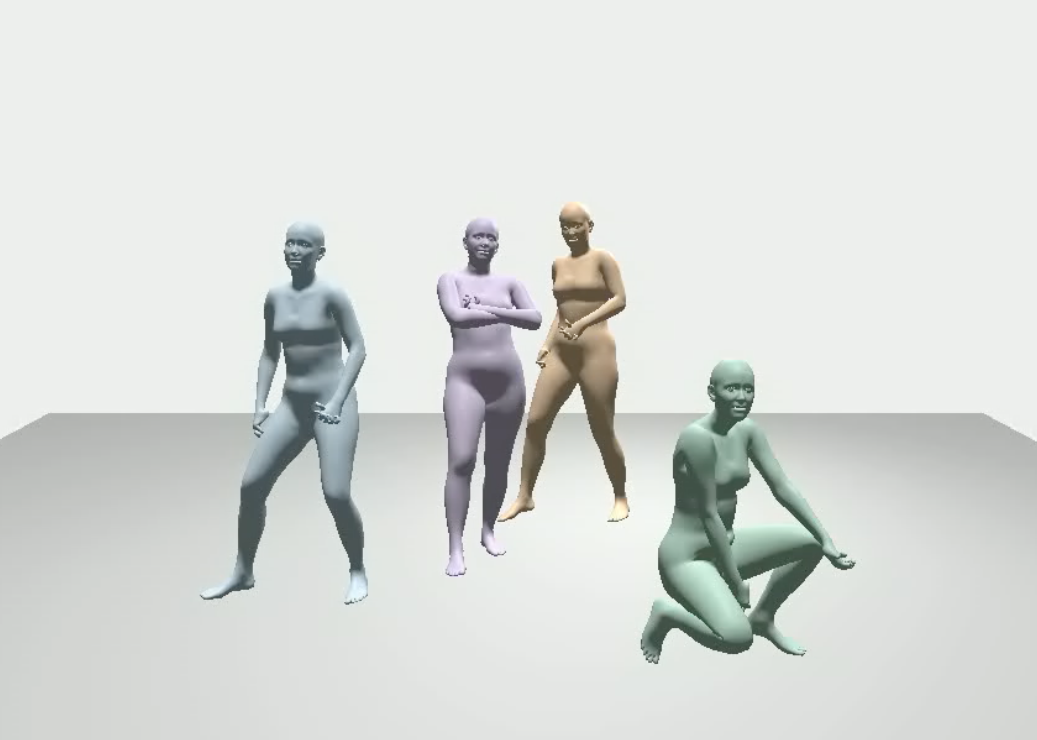

Expressive Multi-Dancer Dataset

Under review

A large-scale expressive multi-dancer dataset with automated annotation for world-grounded multi-person SMPL-X motion, including detailed hand articulation, facial expressions, audio, and structured text descriptions.

WildAni4D: Towards 4D Animal Mesh Reconstruction

Gyeongsu Cho, Hezhen Hu, Donghyeon Soon, Changwoo Kang, Kyungdon Joo

CVPR 2026 (Findings) [paper] / [project page]

We present a synthetic animal video pipeline and a video transformer model for reconstructing temporally coherent 4D animal motion and global trajectories from monocular in-the-wild videos.

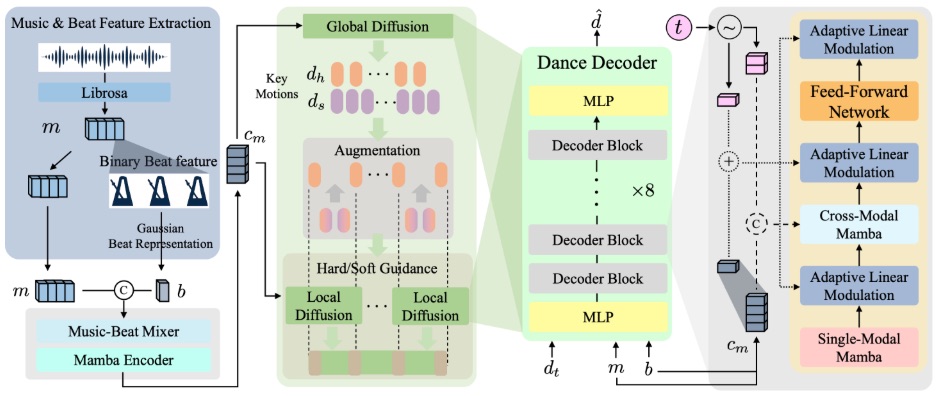

Not Like Transformers: Drop the Beat Representation for Dance Generation with Mamba-Based Diffusion Model

Sangjune Park, Inhyeok Choi, Donghyeon Soon, Youngwoo Jeon, Kyungdon Joo†

WACV 2026. Also presented at ICCVW 2025 Workshop on Generative Models for Audio-Visual Creation (Gen4AVC) [paper] / [project page]

We propose a Mamba-based diffusion framework for music-driven dance generation with a redesigned beat representation for better motion modeling and rhythm alignment.

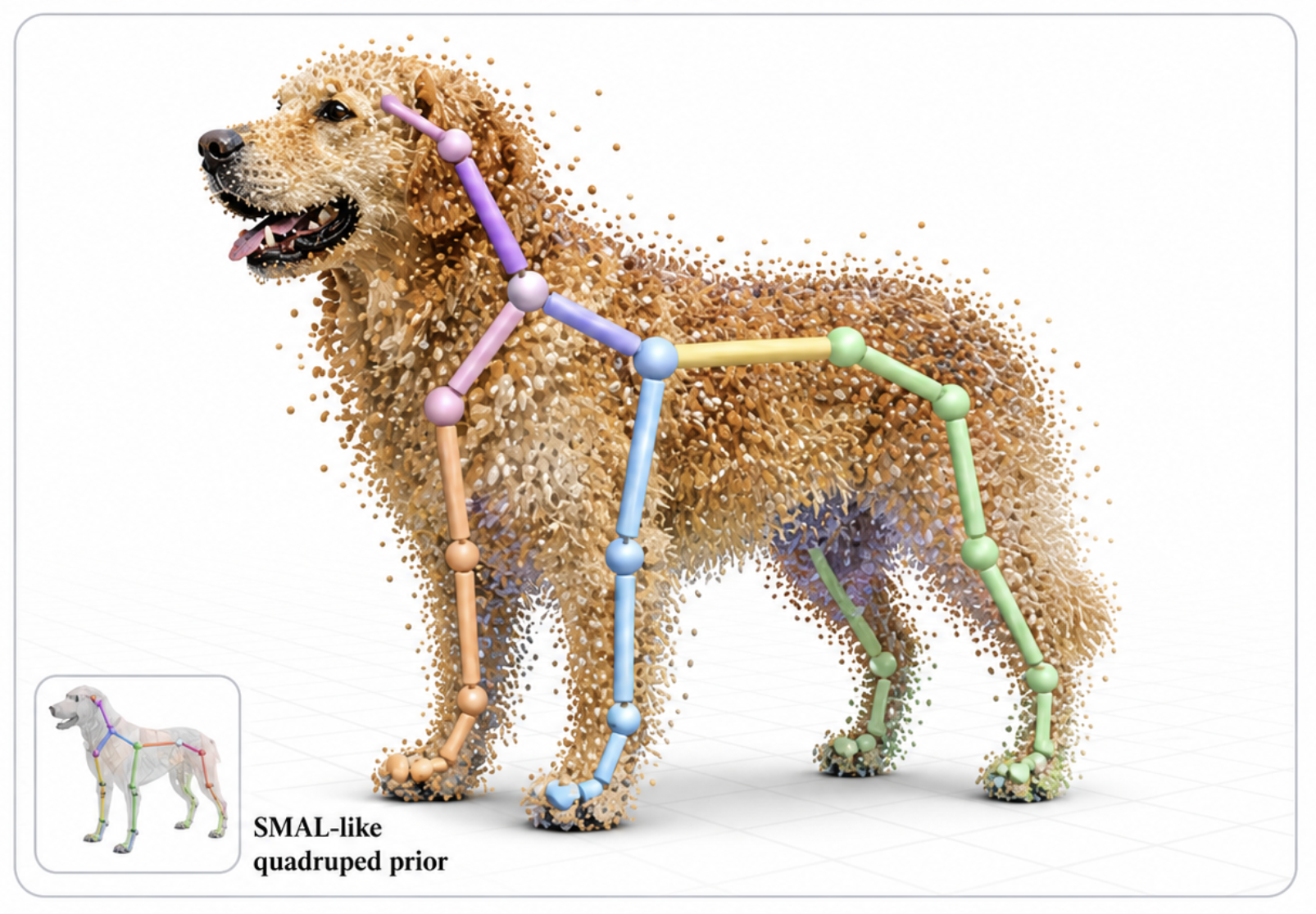

DogRecon: Canine Prior-Guided Animatable 3D Gaussian Dog Reconstruction From a Single Image

Gyeongsu Cho, Changwoo Kang, Donghyeon Soon, Kyungdon Joo†

International Journal of Computer Vision (IJCV), 2025. Also presented at CVPR 2024 Workshop on Computer Vision for Animals (CV4Animals) [paper] / [project page]

DogRecon reconstructs an animatable 3D Gaussian dog from a single RGB image by incorporating canine priors and robust geometry-aware optimization.

Selected domestic papers

Foothold Planning

Taebok Lee*, Donghyeon Soon*, Kyungdon Joo†

ICROS, 2026 (under review)

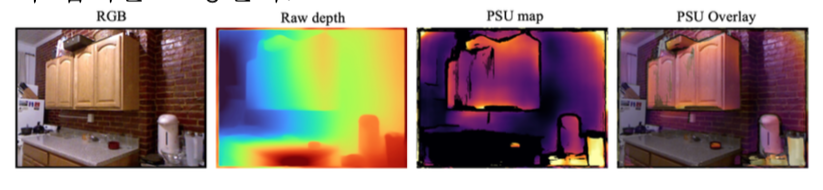

Improving Geometric Processing with Plane-aware Perturbation Uncertainty and Inference-time Control for Monocular Depth Estimation

Donghyeon Soon*, Minseok Oh*, Sangchul Lee†

Korea Artificial Intelligence Conference, 2026

We propose a training-free inference-time control framework that estimates perturbation sensitivity uncertainty from monocular depth predictions and uses it for selective refinement and reliable 3D point filtering, improving downstream plane-based geometric processing.

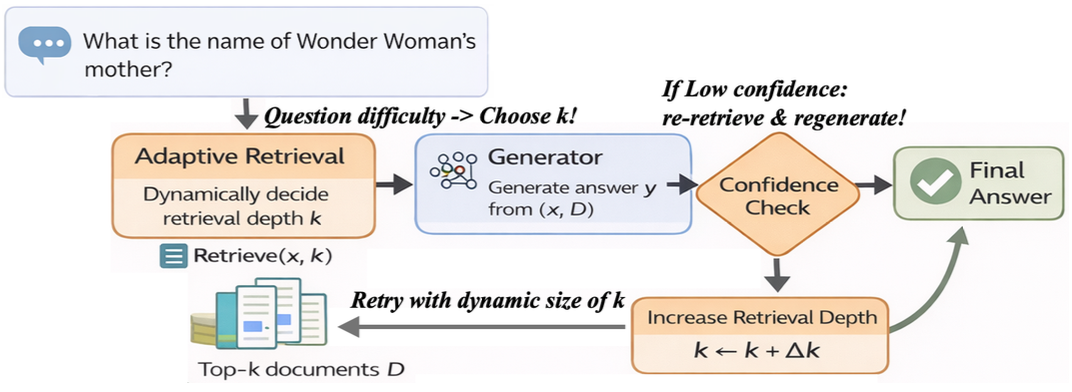

CC-RAG: Confidence-Controlled Retrieval-Augmented Generation

Donghyeon Soon*, Minseok Oh*, Sangchul Lee†

Korea Artificial Intelligence Conference, 2026

We present a training-free inference-time control method for retrieval-augmented generation that combines rule-based confidence estimation with adaptive top-k retrieval to improve answer reliability and reduce low-confidence failures.

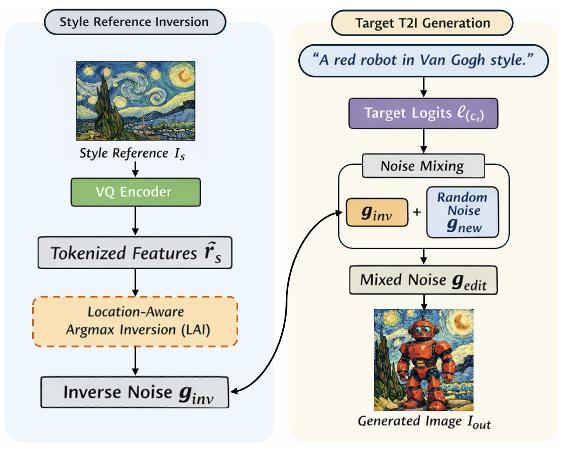

Next-Scale Inversion Based Reference-Guided T2I Style Transfer

Minseok Oh*, Donghyeon Soon*, Sangchul Lee†

Korea Artificial Intelligence Conference, 2026 🏆 Outstanding Paper Award

We propose a training-free reference-guided text-to-image style transfer method that extracts a style carrier via next-scale inversion and injects it during autoregressive generation, enabling controllable stylization while preserving prompt-driven structure.

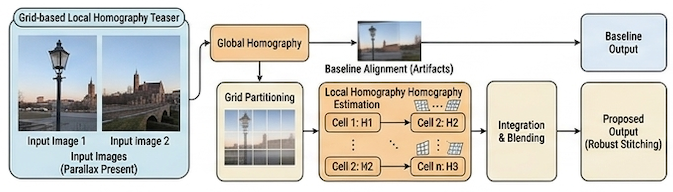

Grid-based Local Homography Model for Panorama Stitching under Parallax

Donghyeon Soon, Minseok Oh, Taebok Lee

Korea Artificial Intelligence Conference, 2026

This work analyzes the limitations of global homography under parallax and introduces a grid-based local homography model for robust panorama stitching.

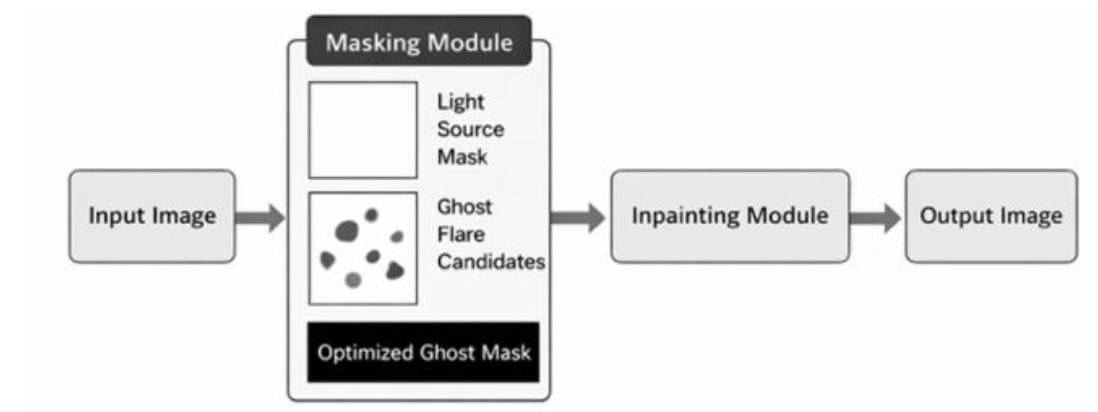

Ghost Flare Localization and Removal Via Convex Sparse Template Selection

Minseok Oh*, Donghyeon Soon*, Sangchul Lee†

Korea Artificial Intelligence Conference, 2026

We propose a training-free method for ghost flare localization and removal in smartphone images. Our approach generates light-source-based ghost template candidates and selects a minimal subset via convex sparse optimization, producing compact masks that suppress over-masking and enable selective local inpainting for artifact removal.

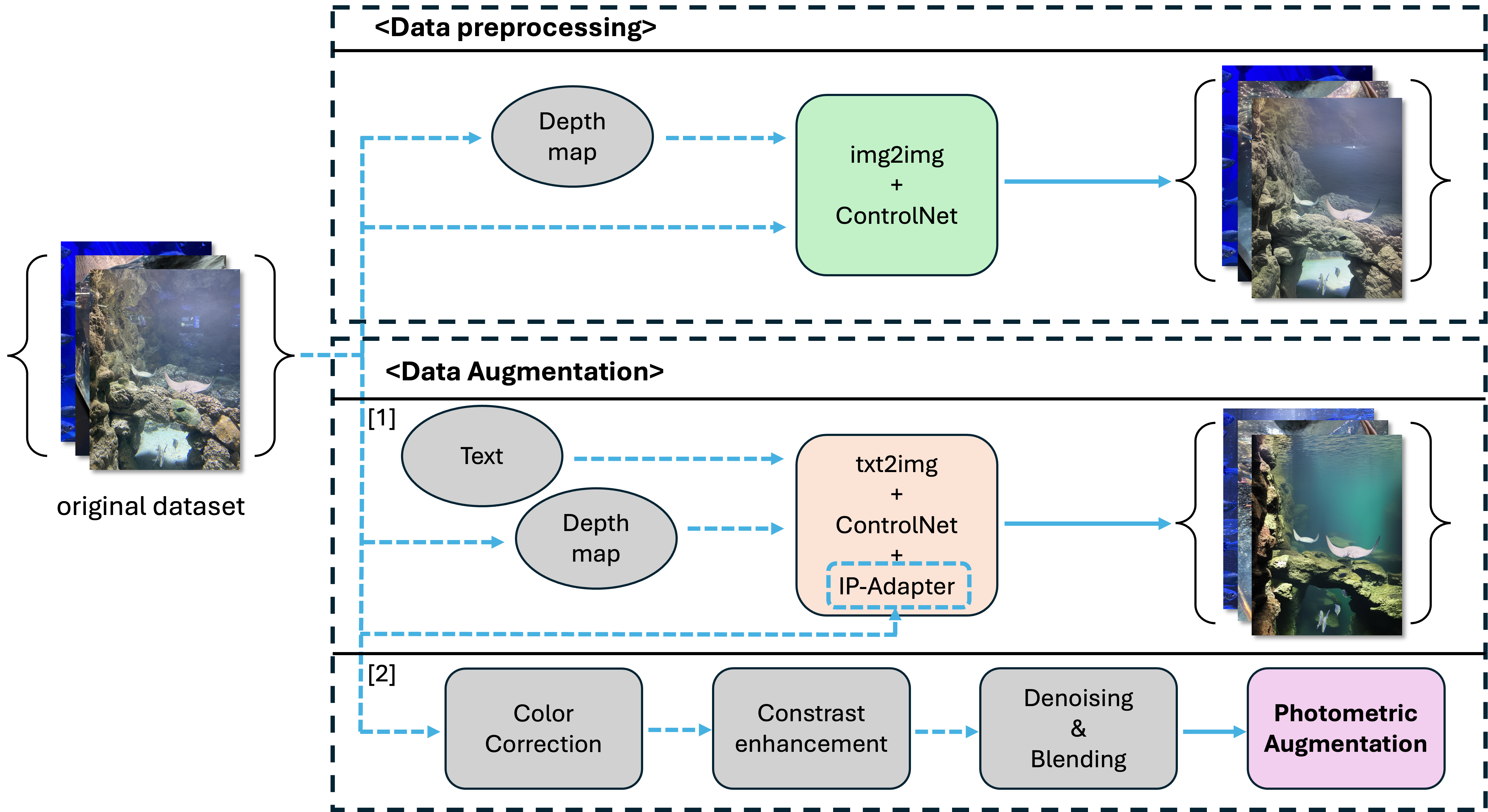

Enhancing Underwater Object Detection via Conditional Diffusion Model based Preprocessing and Augmentation

Donghyeon Soon*, Taebok Lee*

Korea Artificial Intelligence Conference, 2025 [paper] 🏆 Outstanding Paper Award

We propose a conditional diffusion-based preprocessing and augmentation pipeline to improve underwater object detection under challenging visibility conditions.

Education

Experience

If you'd like to discuss potential collaborations or have any questions, please feel free to contact me at dhsoon@dgist.ac.kr.
